Description:

- Introduction

- What Smallest.ai Actually Is

- What Smallest.ai Does Best

- Core Features and Capabilities

- Lightning TTS Is the Main Product to Understand

- Voice Agents and Enterprise Workflows

- Workflow and Ease of Use

- Models and Platform Layers That Matter

- Voice Quality and Control

- Integrations and Developer Fit

- Best Use Cases

- Comparison to Other AI Voice Tools

- Practical Tips

- Limitations and Trade-Offs

- Final Takeaway

Smallest.ai is a voice AI platform built around speed. Its clearest strength is low-latency text-to-speech for real-time voice agents, with a broader product direction that includes voice cloning, speech-to-text, SDKs, enterprise voice agents, and deployment options for teams building AI phone, support, IVR, and conversational voice systems.

Smallest.ai is not mainly a creator voiceover studio. It is closer to a developer-first voice infrastructure platform.

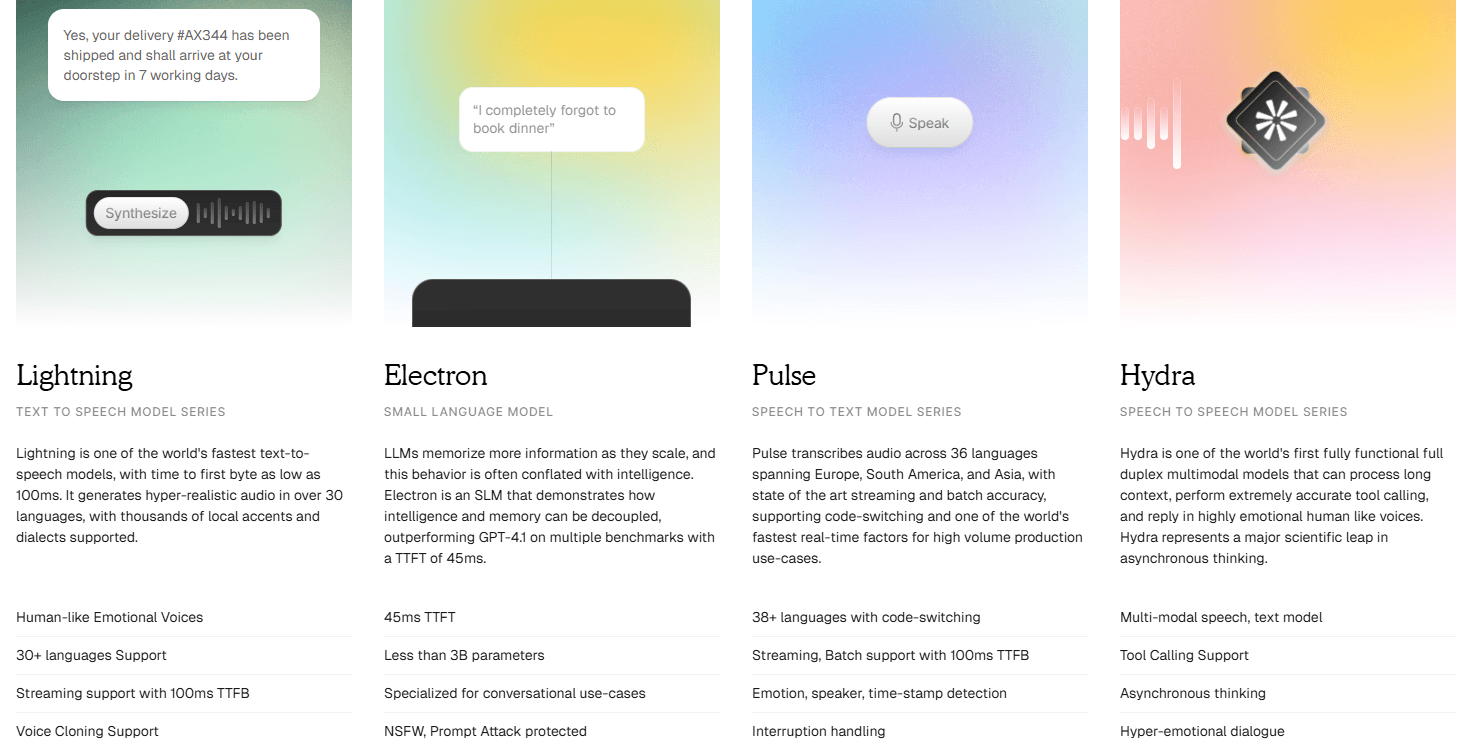

The product is built around Waves, its voice model platform, and Lightning, its text-to-speech model series. Smallest.ai describes Lightning as one of the world’s fastest TTS models, with time to first byte as low as 100ms, streaming support, voice cloning support, and multilingual coverage. Its homepage currently describes Lightning as supporting 30+ languages, while its dedicated Lightning V3.1 page lists 15 supported languages for that specific version. That difference matters because the broader product family and the current model-specific support are not described in exactly the same way across every page.

The easiest way to understand Smallest.ai is through three layers:

| Layer | What it does | Why it matters |
|---|---|---|
| Lightning TTS | Converts text into fast, natural-sounding speech. | Main reason to use Smallest.ai for voice agents and live applications. |
| Voice Agents | Enterprise-ready agents for inbound and outbound calls. | Useful for support, sales, collections, recruiting, healthcare, and operations. |
| Developer Infrastructure | APIs, SDKs, WebSocket/SSE streaming, and integrations. | Lets teams plug Smallest.ai into real voice systems instead of only using a web demo. |

That positioning is important. Smallest.ai is not trying to win only on “nice voices.” It is trying to win on voice systems that need to speak quickly, sound natural, handle real workflows, and fit into developer pipelines.

Smallest.ai is strongest when latency matters.

For normal narration, a few extra seconds of generation time may not matter. For a live voice agent, it does: a caller notices even small delays on a phone call. That is why Smallest.ai’s focus on fast TTS makes sense. Its docs describe the Lightning TTS API as converting text into natural speech with roughly 100 ms latency and a 44.1 kHz native sample rate, with synchronous generation plus streaming over SSE and WebSocket.
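
Latency claims like these are easiest to judge by measuring when the first audio chunk arrives, not when the whole file is done. The sketch below is a minimal, vendor-neutral way to do that in Python. `fake_tts_stream` is a hypothetical stand-in for a real streaming response, and nothing here calls the Smallest.ai API.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(chunks: Iterable[bytes]) -> Tuple[float, float, int]:
    """Return (seconds to first chunk, total seconds, total bytes) for a stream."""
    start = time.perf_counter()
    first_chunk_at = None
    total_bytes = 0
    for chunk in chunks:
        # Record the arrival time of the first non-empty chunk only.
        if first_chunk_at is None and chunk:
            first_chunk_at = time.perf_counter() - start
        total_bytes += len(chunk)
    total = time.perf_counter() - start
    # An empty stream never produced a first chunk; report the total instead.
    if first_chunk_at is None:
        first_chunk_at = total
    return first_chunk_at, total, total_bytes


def fake_tts_stream(n_chunks: int = 5, delay_s: float = 0.01) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming TTS response (not a real API call)."""
    for _ in range(n_chunks):
        time.sleep(delay_s)
        yield b"\x00" * 1024


if __name__ == "__main__":
    ttfb, total, size = measure_stream(fake_tts_stream())
    print(f"first chunk after {ttfb * 1000:.1f} ms, {size} bytes in {total * 1000:.1f} ms")
```

In a real test, the iterable passed to `measure_stream` would be the chunk iterator of whichever HTTP or WebSocket client the team already uses, measured from the machine and region where the agent will run.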

That makes Smallest.ai especially relevant for AI receptionists, customer support voice agents, outbound sales or reminder calls, IVR systems, voice agent platforms that need a TTS layer, and live conversational applications where response delay breaks the experience.

Its dedicated text-to-speech page also frames Lightning around real-time agents, audiobooks, podcast production, gaming dialogue, accessibility tools, and any application “where the voice is the product.” The most practical takeaway is this: Smallest.ai is less about making a polished one-off narration file and more about making voice generation responsive enough for real-time interaction.

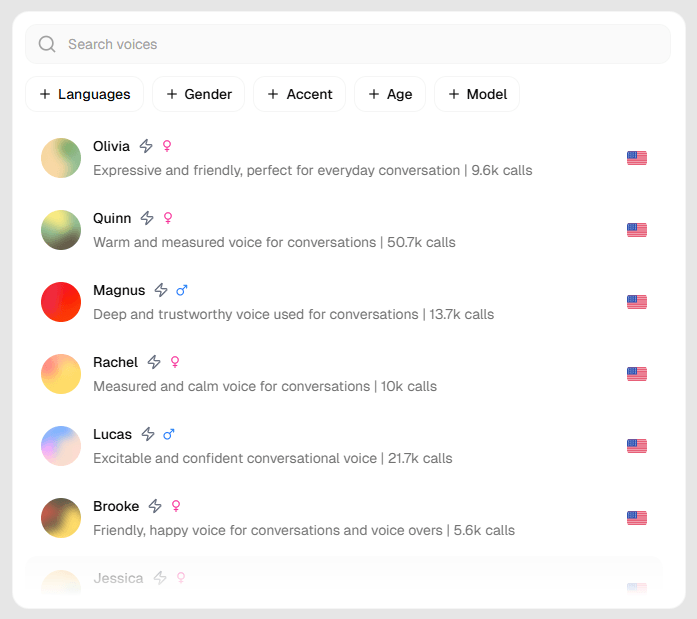

- Lightning TTS: Fast speech generation for voice agents, IVR, narration, and real-time applications, with streaming support and low-latency positioning.

- Lightning V3.1: Smallest.ai’s current conversational TTS model, described as its most natural-sounding model to date, with automatic language detection and mid-sentence language switching.

- Voice cloning: Built into the product direction for creating production-ready synthetic voices and personalized agent voices.

- Voice agents: Enterprise-ready AI agents for inbound and outbound calls, with support for parallel call handling and workflow customization.

- SDKs and APIs: Python, Node.js, REST APIs, and telephony integration support are positioned as part of the voice agent workflow.

- LiveKit integration: Smallest.ai’s Waves platform can be used as a TTS provider inside LiveKit Agents through an official plugin path.

Lightning is the center of Smallest.ai’s current public identity.

The model is built for speed first, but the company is also pushing naturalness and conversation quality. The dedicated Lightning TTS page says it adapts across use cases from real-time agents to long-form narration, and it highlights voice agents, gaming, audiobooks, and other production contexts.

Lightning V3.1 is especially important because it is positioned specifically for conversational voice agents. Smallest.ai says Lightning V3.1 supports English, Spanish, French, Italian, Dutch, Swedish, Portuguese, German, Hindi, Tamil, Kannada, Telugu, Malayalam, Marathi, and Gujarati, with automatic language detection and mid-sentence language switching.

That mid-sentence switching point is more useful than it sounds. Many real customer conversations do not stay neatly inside one language. This is especially true in India, multilingual support centers, immigrant communities, travel, healthcare intake, and customer service environments where speakers naturally mix languages. A voice system that can handle language switching more naturally has a better chance of feeling usable in real conversations.

The caveat is that the website currently describes broader Lightning support as 30+ languages, while the Lightning V3.1 FAQ and announcement name 15 languages for that model. For a production buyer, the safest interpretation is: check the exact model and language you plan to deploy, rather than assuming every listed language works equally across every Smallest.ai surface.
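
One low-effort way to act on that advice is to keep the required languages in a deployment checklist and compare them against the list for the specific model. The Python sketch below assumes the 15 Lightning V3.1 languages named in this article. The set is a snapshot to be re-checked against the current Smallest.ai documentation, and the function name is illustrative.

```python
# Languages this article lists for Lightning V3.1. Treat this as an assumption
# and verify it against the current model documentation before relying on it.
LIGHTNING_V3_1_LANGUAGES = frozenset({
    "english", "spanish", "french", "italian", "dutch", "swedish",
    "portuguese", "german", "hindi", "tamil", "kannada", "telugu",
    "malayalam", "marathi", "gujarati",
})


def unsupported_languages(required, supported=LIGHTNING_V3_1_LANGUAGES):
    """Return the required languages that are missing from the supported set."""
    normalized = {lang.strip().lower() for lang in required}
    return sorted(normalized - supported)


if __name__ == "__main__":
    missing = unsupported_languages(["English", "Hindi", "Bengali"])
    print("Needs manual verification:", missing)
```

Anything the function returns is a prompt to test that language directly on the target endpoint, not proof that it is unsupported elsewhere in the product family.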

Smallest.ai’s voice agent product is where the platform becomes more than a TTS API.

The company describes its agents as enterprise-ready and custom-trained on customer data. The voice agent page emphasizes several practical areas: complex SOP handling, parallel call volume, number and acronym handling, performance evaluation, multilingual support, and SDK/API integration.

That is the right set of concerns for real AI calling systems. A demo voice agent can sound impressive for a minute. A production voice agent has to handle messy edge cases, interruptions, names, numbers, accents, unclear intent, compliance steps, transfer rules, and failure states.

Smallest.ai also calls out specific use cases across accessibility, content, healthcare, media, recruiting, sales, small business, and support. That broad positioning makes sense, but the strongest fit is still operational voice automation: places where repeated phone conversations can be partially or fully automated.

Smallest.ai has two very different user experiences.

For developers, the workflow is straightforward. Get an API key, call the Waves API, select the model or voice, stream or generate audio, and integrate it into a voice pipeline. The documentation describes the Lightning TTS API endpoint, authentication through a bearer token, and quickstart-style setup.
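
The developer steps above can be sketched as a request builder in Python. Only the bearer-token authentication comes from the description above. The endpoint URL, the payload field names (`text`, `voice_id`), and the `SMALLEST_API_KEY` environment variable are hypothetical placeholders, so take the real values from the Waves documentation. The sketch builds the request without sending it.

```python
import json
import os
import urllib.request

# Hypothetical placeholder URL: replace with the endpoint from the Waves docs.
TTS_ENDPOINT = "https://api.example.invalid/tts"


def build_tts_request(text: str, voice_id: str, api_key: str,
                      endpoint: str = TTS_ENDPOINT) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request with bearer-token auth."""
    # Field names are assumptions for illustration, not the documented schema.
    payload = {"text": text, "voice_id": voice_id}
    return urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # The environment variable name is also an assumption.
    key = os.environ.get("SMALLEST_API_KEY", "missing-key")
    request = build_tts_request("Your appointment is confirmed.", "example-voice", key)
    print(request.get_method(), request.full_url)
```

Keeping request construction separate from sending makes it easy to unit-test headers and payloads before any audio is generated or billed.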

For voice agent teams, the workflow is more involved. You are not just generating audio. You are designing a full conversational system: telephony, speech-to-text, TTS, LLM behavior, business rules, call transfer logic, analytics, latency monitoring, and QA. Smallest.ai’s official Node SDK description reflects this split by saying developers can use Waves for TTS directly or use Atoms to build and operate end-to-end enterprise-ready voice agents.

For non-technical creators, Smallest.ai may feel less immediately friendly than tools built around a polished studio interface. Its strongest value shows up when it is embedded into an app, product, call workflow, or voice agent stack.

Smallest.ai’s naming can feel slightly confusing at first because the public pages reference both products and model names. The main practical split looks like this:

| Name | Role | Best For |

|---|---|---|

| Waves | Voice model/API platform | Developers integrating voice generation. |

| Lightning | Text-to-speech model series | Fast speech for voice agents and real-time use. |

| Lightning V3.1 | Current conversational TTS model | Natural-sounding multilingual voice agents. |

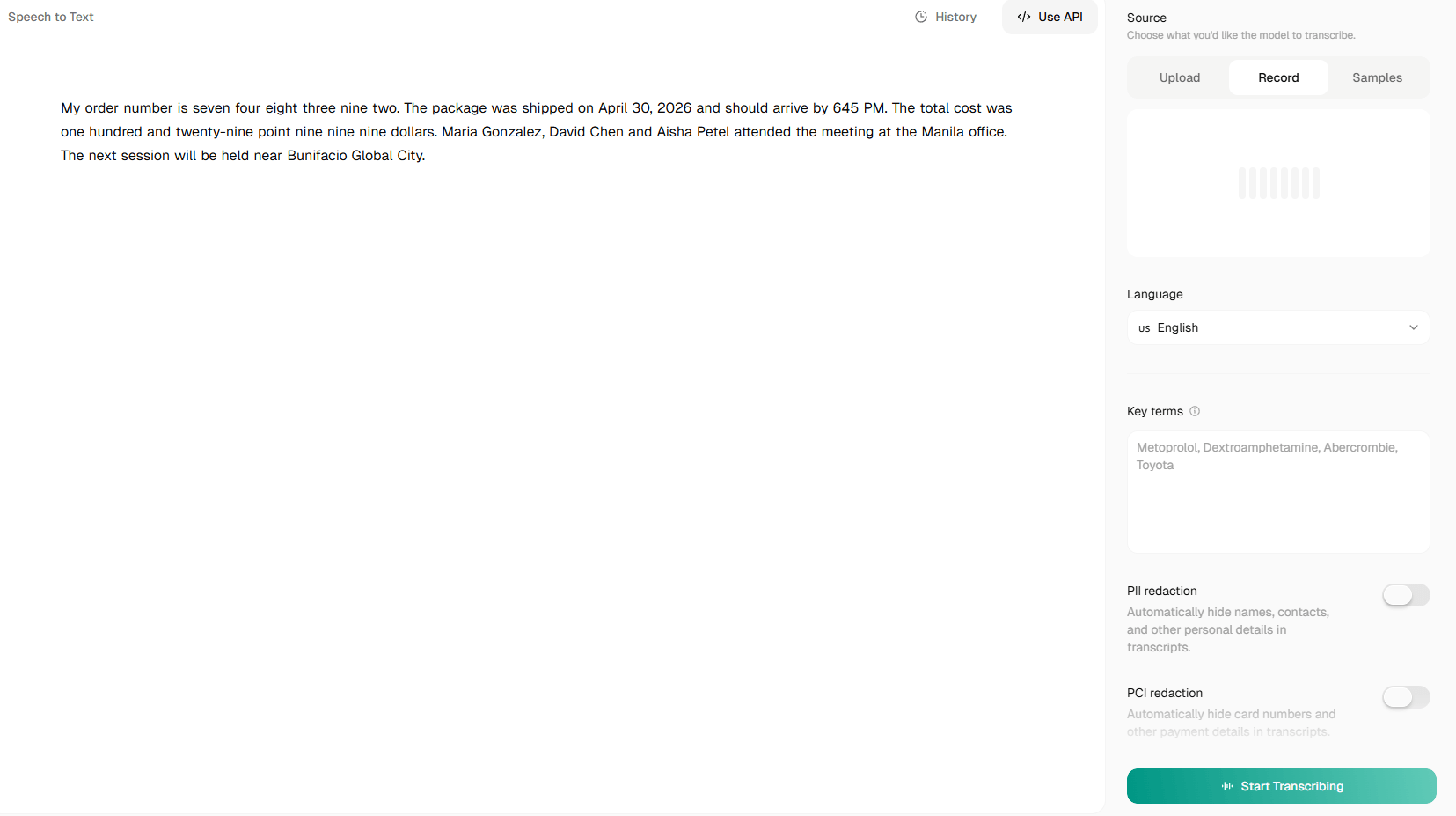

| Pulse | Speech-to-text direction in Smallest.ai’s ecosystem | Real-time transcription and ASR workflows. |

| Atoms | Agent-building layer referenced in SDK material | End-to-end voice agent operations. |

| Voice Agents | Managed enterprise agent product | Inbound/outbound automation at scale. |

Lightning is the product most users should evaluate first because it is the clearest and most mature public value proposition. Voice Agents matter if the buyer wants Smallest.ai to provide more of the full calling workflow, not just speech generation.

Pulse and Atoms are also important, but buyers should verify current public availability and docs before making assumptions. Some Smallest.ai pages show “Coming Soon” labels next to navigation entries such as Pulse, Hydra, Voice Agents, and Voice Cloning, while the SDK and voice agent pages already reference parts of the broader stack. That does not make the platform unusable, but buyers should confirm which components are self-serve, sales-led, generally available, or still rolling out.

Smallest.ai’s voice quality is aimed at conversation rather than only polished narration.

That distinction matters. Audiobook narration can tolerate a more deliberate cadence. A phone agent needs speech that feels responsive, natural, and not overly theatrical. Smallest.ai’s Lightning page highlights conversational AI that sounds like a real person rather than a script reader, while its Lightning V3.1 announcement frames the model around natural-sounding conversational TTS.

The voice agent page also specifically mentions handling complex acronyms and numbers such as credit cards and phone numbers with pacing and intonation. That is a practical strength for real phone workflows, where digits, confirmations, account numbers, dates, addresses, and IDs often make or break the call experience.
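
When testing how any TTS engine reads identifiers, it helps to have a baseline that spells digits out explicitly, so the model's own handling can be compared against it. The Python sketch below is one such baseline. It is a generic preprocessing fallback written for illustration, not a description of how Smallest.ai handles numbers internally.

```python
import re

DIGIT_WORDS = ["zero", "one", "two", "three", "four",
               "five", "six", "seven", "eight", "nine"]


def speak_digits(number: str, group: int = 3) -> str:
    """Spell out the ASCII digits in `number`, with a comma pause between groups."""
    words = [DIGIT_WORDS[int(c)] for c in number if c in "0123456789"]
    groups = [" ".join(words[i:i + group]) for i in range(0, len(words), group)]
    return ", ".join(groups)


def expand_numbers(text: str, min_len: int = 4) -> str:
    """Replace runs of `min_len` or more digits with their spoken-digit form."""
    pattern = re.compile(r"\d{%d,}" % min_len)
    return pattern.sub(lambda match: speak_digits(match.group()), text)


if __name__ == "__main__":
    print(speak_digits("555-0142"))
    print(expand_numbers("Your code is 482913."))
```

Running the same call script with and without this expansion shows whether the model's native number handling is good enough for a given set of account numbers and codes.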

Where Smallest.ai may feel less dominant is in creator-style emotional direction. Tools like ElevenLabs are better known for expansive voice libraries, creator workflows, dubbing, and expressive narration. Smallest.ai’s advantage is more technical: fast generation, agent-readiness, and conversational deployment.

Smallest.ai is clearly aimed at developers building voice products.

Its voice agent page lists Python, Node.js, and REST APIs for integration into systems and telephony. Its official Node SDK says Smallest AI offers an end-to-end voice AI suite for developers building real-time voice agents. LiveKit’s documentation also includes a Smallest AI plugin that uses the Waves platform as a TTS provider for LiveKit Agents.

That matters because voice agents are rarely built from one tool alone. A real stack may include telephony, speech-to-text, LLM orchestration, text-to-speech, CRM or support-system actions, analytics and call review, and human handoff.

Smallest.ai fits best when it can act as the fast voice layer inside that broader system, or when a company wants its managed voice agent product to handle more of the workflow.

- AI phone agents: Smallest.ai is a strong fit for companies building agents for customer support, scheduling, collections, recruiting, reminders, lead qualification, and follow-up calls.

- IVR and customer support automation: Lightning’s low-latency TTS and number/acronym handling make it useful for phone menus, account workflows, and support conversations.

- Voice agent platforms: Teams building their own agent infrastructure can use Smallest.ai as a TTS layer, especially where streaming speed matters.

- Multilingual customer operations: Lightning V3.1’s listed language support and mid-sentence language switching make it useful for regions where multilingual speech is normal.

- Gaming and character dialogue: The Lightning page lists gaming dialogue as a use case, and fast TTS can be useful for dynamic NPC speech or live interactive experiences.

- Accessibility tools: Fast text-to-speech can support assistive reading, spoken interfaces, and accessibility-focused voice products.

- Content and narration workflows: Smallest.ai can be used for audiobooks, podcasts, and long-form narration, though this is not where its differentiation is sharpest compared with creator-first voice platforms.

| Tool | Strongest Fit | Where Smallest.ai Stands |
|---|---|---|
| ElevenLabs | Expressive TTS, voice cloning, dubbing, creator narration. | ElevenLabs is stronger for creator voice production; Smallest.ai is more focused on fast agent-ready TTS and real-time deployment. |
| Deepgram | Speech-to-text, TTS, voice AI infrastructure. | Deepgram is broader on transcription infrastructure; Smallest.ai’s clearest pitch is low-latency conversational TTS and agent voice. |
| PlayHT | TTS and voice cloning for content and apps. | PlayHT is creator/developer hybrid; Smallest.ai feels more optimized around live voice agents. |
| OpenAI Realtime-style voice stacks | LLM-native voice interaction. | OpenAI-style systems are compelling when reasoning is central; Smallest.ai is useful when fast voice output and telephony deployment matter. |
| LiveKit + voice model providers | Custom real-time voice stacks. | Smallest.ai can slot into this ecosystem as a Waves TTS provider through LiveKit’s plugin path. |

The simple version: choose Smallest.ai when you care most about fast, natural TTS for real-time voice agents. Choose ElevenLabs when expressive narration and voice cloning depth matter more. Choose Deepgram when speech-to-text infrastructure is the main decision. Choose a full-stack real-time AI platform when you want the LLM, voice, and agent runtime tightly bundled.

- Test with real call scripts, not clean marketing sentences. Voice agent speech needs to handle names, numbers, confirmations, short replies, hesitation, and repetitive phrases.

- Evaluate latency across the full pipeline. Smallest.ai may generate speech quickly, but the user experience depends on STT, LLM response time, TTS, streaming, and telephony together.

- Check the exact language and model before committing. Public pages mention different language counts depending on the product surface, so validate the specific model you plan to use.

- Use streaming for real-time interaction. Sync generation is fine for clips, but WebSocket or SSE workflows matter when the user is waiting in a live conversation.

- Pay special attention to numbers and acronyms. Smallest.ai highlights this as a voice agent strength, but every business has its own account numbers, product codes, abbreviations, and compliance wording.

- Treat voice agents as workflows, not demos. The speech model is only one part. You still need escalation paths, call outcome logging, QA, compliance, and fallback behavior.

- Use LiveKit if you are already building real-time agent pipelines there. The official LiveKit plugin path makes Smallest.ai easier to test as a TTS provider inside that ecosystem.
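
The advice to evaluate latency across the full pipeline can be made concrete with a simple per-stage budget. In the Python sketch below, every number is a hypothetical placeholder except the TTS figure, which reuses the roughly 100 ms vendor claim. Replace all of them with measurements from your own stack.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float


def total_latency(stages: List[Stage]) -> float:
    """Sum the per-stage latencies for one conversational turn."""
    return sum(stage.latency_ms for stage in stages)


def slowest(stages: List[Stage]) -> Stage:
    """Return the stage that contributes the most latency."""
    return max(stages, key=lambda stage: stage.latency_ms)


# Hypothetical example values: measure your own pipeline instead.
PIPELINE = [
    Stage("telephony", 80),
    Stage("speech-to-text", 200),
    Stage("llm", 450),
    Stage("tts first byte", 100),
]

if __name__ == "__main__":
    print(f"turn latency: {total_latency(PIPELINE):.0f} ms")
    print(f"largest contributor: {slowest(PIPELINE).name}")
```

With placeholder numbers like these, the TTS layer is a small share of the turn. That is the point of the exercise: a fast voice model only pays off if the other stages are kept in check.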

- Smallest.ai’s biggest limitation is product clarity. The core Lightning TTS story is clear, but the broader ecosystem includes names like Waves, Atoms, Pulse, Hydra, Voice Agents, and Voice Cloning. Some pages show “Coming Soon” text in navigation areas, while other docs or SDK references suggest active integration paths. Buyers should verify availability before planning around every named component.

- The second limitation is that Smallest.ai is more developer- and enterprise-oriented than creator-oriented. If someone wants a polished browser studio for YouTube voiceovers, dubbing, or voice acting, they may prefer a tool with more visible editing controls, project timelines, and voice libraries.

- The third limitation is that fast TTS is only one part of a good voice agent. A slow LLM, weak call routing, poor speech recognition, bad prompts, or missing business logic can still ruin the experience. Smallest.ai can help with the voice layer, but it does not automatically solve the full conversational design problem.

- There is also some ambiguity around language coverage. The homepage describes 30+ language support for Lightning broadly, the docs page describes 80+ voices across 4 languages for the Lightning API overview, and the Lightning V3.1 announcement and FAQ list 15 languages for that version. This is not necessarily contradictory, but it does mean teams should test the specific endpoint, model, language, and voice they intend to ship.

- Finally, voice agent deployments need safety and consent controls. Any platform that supports voice cloning and automated calls can be misused if companies do not handle disclosure, caller consent, data retention, escalation, and compliance properly.

Smallest.ai is best for teams building real-time voice products, especially AI phone agents and conversational systems where low-latency text-to-speech matters.

Its strongest advantage is Lightning: fast, natural-sounding TTS built for live interaction, with streaming support, voice cloning direction, multilingual coverage, and developer-friendly integration paths. It is especially useful for support, sales, IVR, healthcare communication, recruiting, accessibility, and voice agent platforms.

The main caveat is that Smallest.ai is not the cleanest fit for casual creators who just want a simple voiceover studio. It is most valuable when voice is part of a real product or operational workflow, and when the buyer is willing to test latency, language support, integration behavior, and call quality under production-like conditions.
