
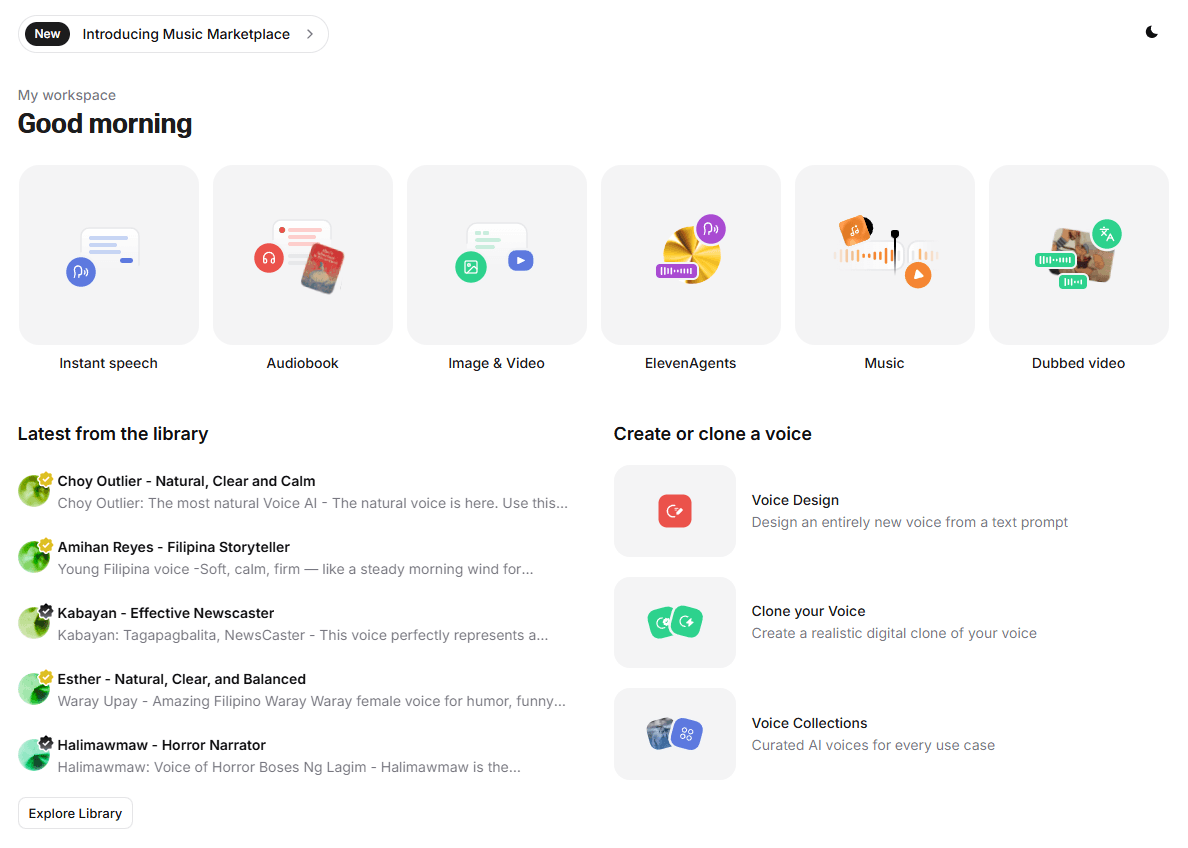

ElevenLabs has grown from “the AI voice app people use for realistic narration” into a broader audio platform for creators, publishers, product teams, and developers.

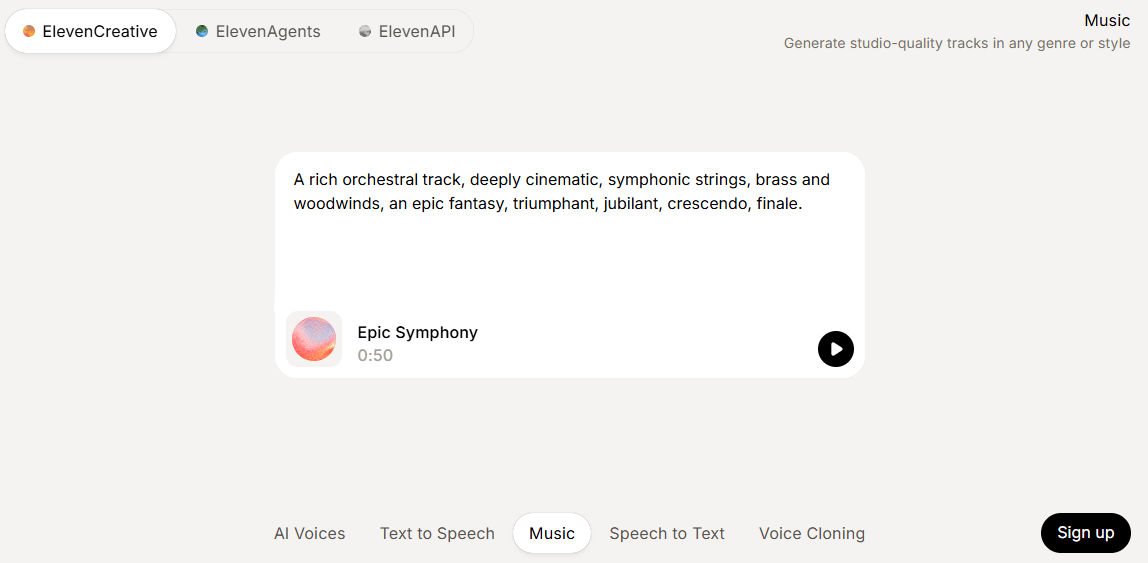

It now covers text-to-speech, transcription, dubbing, voice cloning, voice changing, voice cleanup, conversational agents, and even music and sound effects. That expansion makes it more powerful than a simple voice generator, but it also means the product now has several layers you need to understand before deciding whether it is the right fit.

ElevenLabs is strongest in four areas.

First, it still does realistic AI speech extremely well. Its TTS stack spans several models, with Eleven v3 as the flagship expressive model. ElevenLabs calls Eleven v3 its most advanced text-to-speech model and says the GA release improved stability and reduced errors around numbers, symbols, and specialized notation.

Second, it has become a serious localization tool. Its dubbing workflow supports interactive editing in the UI, API-based automation, and a human-verified production option. That matters because many AI voice tools can generate speech, but fewer give you a practical path from “video in one language” to “localized version I can actually ship.”

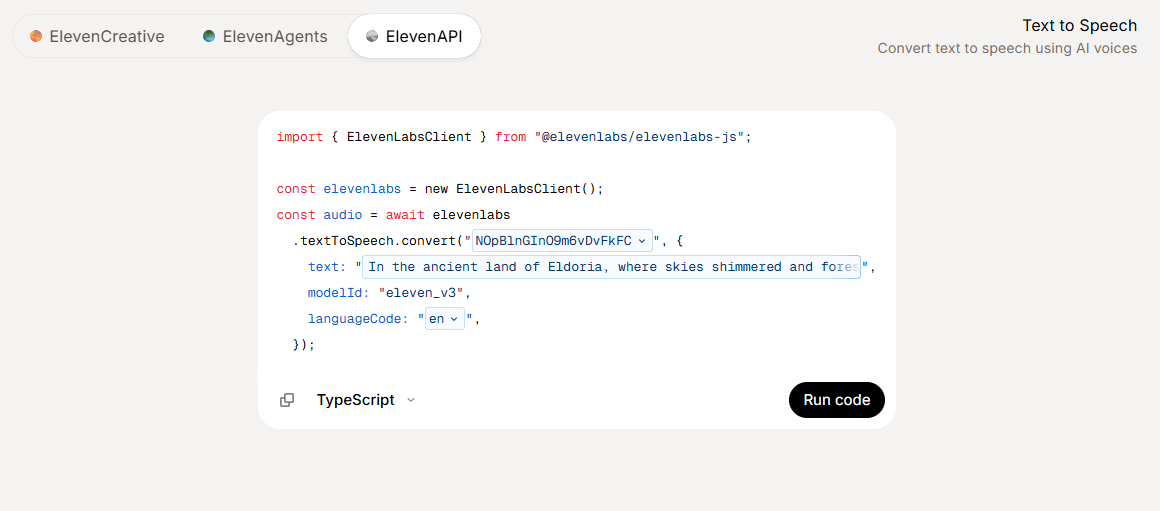

Third, it is now useful for both creators and developers. The web app covers no-code voiceover work, while the API stack supports text-to-speech, speech-to-text, dubbing, sound effects, music, and agents.

Fourth, the product has matured into a broader audio workflow platform. Voiceover Studio, Voice Library, voice cloning, transcription, voice isolation, and agents give ElevenLabs more depth than a one-button narrator app.

Text to speech: high-quality speech generation with nuanced pacing, emotion, and multilingual support.

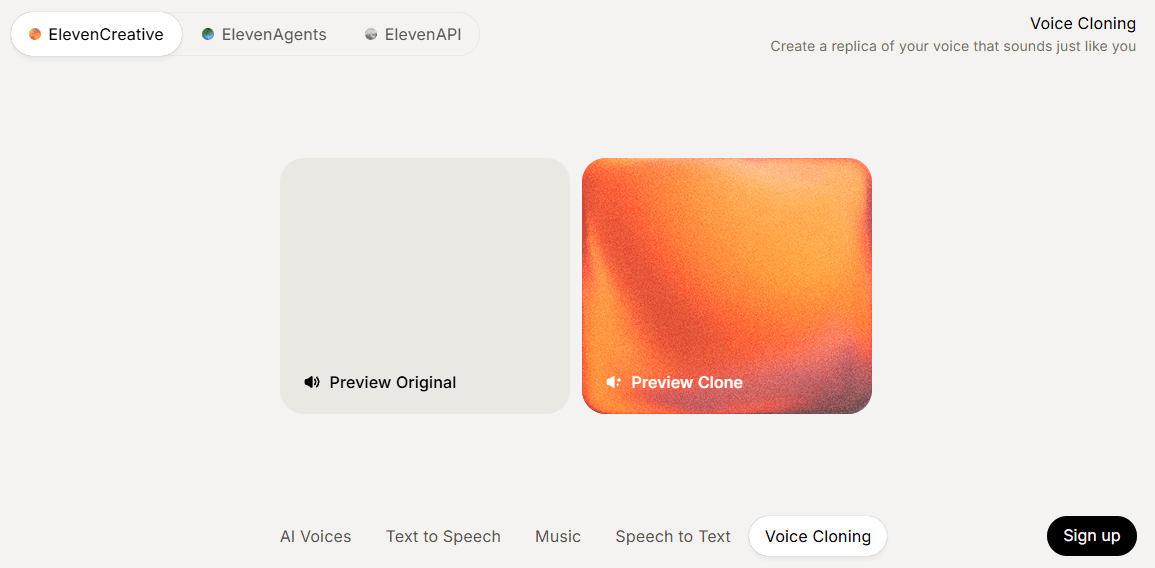

Voice cloning: Instant Voice Cloning for fast setup, and Professional Voice Cloning for higher-fidelity replicas with better long-term production value.

Dubbing: translate and relaunch audio or video with speaker separation, editable transcripts, and preserved vocal identity.

Speech to text: Scribe v2 for accuracy-first batch transcription, and Scribe v2 Realtime for live, low-latency applications.

Voice changing and voice isolation: supporting tools for polishing or transforming recorded audio rather than generating it from scratch.

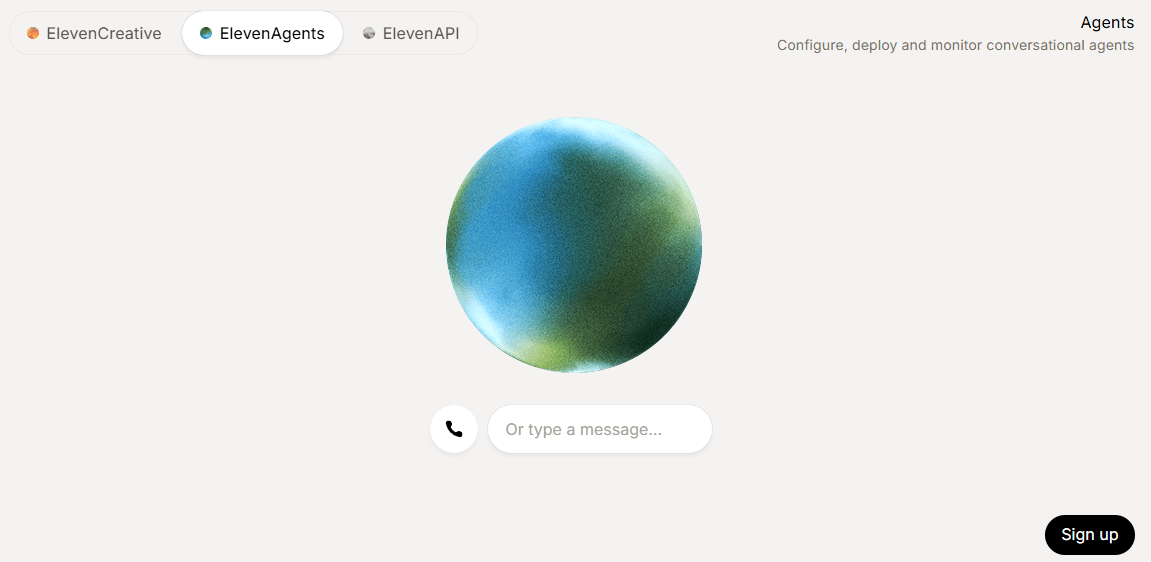

Agents: a real-time agent layer for phone, chat, email, and WhatsApp, plus analytics, testing, workflows, and guardrails.

The easiest way to understand ElevenLabs is to split it into three product lanes.

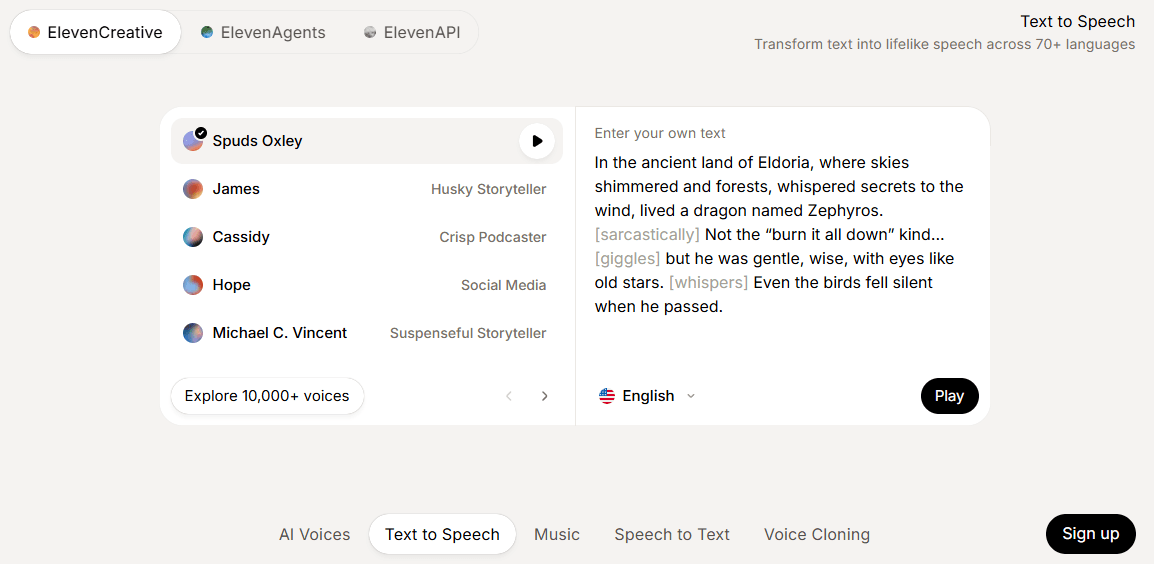

The first lane is quick generation. If you just want a voiceover, the platform is straightforward: paste text, pick a voice, generate, and export. For solo creators, this is still the cleanest entry point and the reason ElevenLabs remains popular.
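For developers, the same flow (paste text, pick a voice, generate, export) maps onto a single HTTP call. The sketch below only builds the request with Python's standard library and does not send it. It assumes three details of the current ElevenLabs API: the public REST endpoint `POST https://api.elevenlabs.io/v1/text-to-speech/{voice_id}`, the `xi-api-key` header, and the model ID `eleven_v3`. Check them against the API reference before relying on them. `build_tts_request` is a hypothetical helper name.

```python
import json
import urllib.request

# Assumed public REST base URL for the ElevenLabs API.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(text: str, voice_id: str, api_key: str,
                      model_id: str = "eleven_v3") -> urllib.request.Request:
    """Build (but do not send) a text-to-speech POST request.

    Hypothetical helper; endpoint path, header name, and model ID are
    assumptions to verify against the official API reference.
    """
    url = f"{API_BASE}/text-to-speech/{voice_id}"
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_tts_request("Hello from the API.", "YOUR_VOICE_ID", "YOUR_API_KEY")
    # Sending it would return audio bytes that you write to disk ("export"):
    # with urllib.request.urlopen(req) as resp, open("out.mp3", "wb") as f:
    #     f.write(resp.read())
    print(req.get_method(), req.full_url)
```

Keeping request construction separate from sending makes the call easy to inspect and test. Swapping `model_id` is where a speed-first model would come in.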

The second lane is production workflow. This is where Voiceover Studio and Dubbing Studio matter. Voiceover Studio uses a timeline-based approach, lets you work with multiple speakers, and supports sound effects on the timeline. Dubbing Studio goes beyond one-click translation by letting you edit transcripts and translations, adjust voice delivery, and regenerate segments until the result sounds right.

The third lane is infrastructure. This is the developer-facing side: APIs, speech recognition, agents, and automation. ElevenLabs is no longer only for YouTubers or audiobook experiments. It is increasingly a voice layer companies can build into support, product, transcription, and multilingual content systems.

For most buyers, four model choices matter most.

Eleven v3 is the premium choice when expressiveness and delivery quality matter most. It is the company's current flagship TTS model, and ElevenLabs says the GA version substantially improved stability and reduced interpretation errors.

Flash v2.5 is the better pick when latency matters more than maximum expressiveness. ElevenLabs describes Flash as an ultra-low-latency TTS model with about 75 ms of model latency, before network and application latency are added.

Scribe v2 is the batch transcription model. It is optimized for long-form recordings, subtitling, and captioning, with accuracy as the main priority.

Scribe v2 Realtime is the live transcription model. It is the better pick for agents, notetakers, or live multilingual apps because it is designed for streaming and low latency, with partial transcriptions around 150 ms.
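The four choices above reduce to two questions:

- Is the task speech generation or recognition?
- Does it run live?

The following is a minimal Python decision helper. It uses the article's model names rather than API model IDs. It also includes a back-of-envelope latency sum for Flash. The 75 ms figure is ElevenLabs' stated model latency. The network and application numbers in the usage line are placeholders, not measurements.

```python
# ElevenLabs' stated model latency for Flash v2.5, in milliseconds.
FLASH_MODEL_LATENCY_MS = 75


def pick_model(task: str, live: bool) -> str:
    """Map a task ("tts" or "stt") and a live/batch flag to a model name."""
    if task == "tts":
        # Live voice favours speed; offline narration favours expressiveness.
        return "Flash v2.5" if live else "Eleven v3"
    if task == "stt":
        # Streaming apps need fast partial results; batch jobs favour accuracy.
        return "Scribe v2 Realtime" if live else "Scribe v2"
    raise ValueError(f"unknown task: {task!r}")


def flash_time_to_audio_ms(network_ms: float, app_ms: float) -> float:
    """Model latency is only one term; network and app overhead add on top."""
    return FLASH_MODEL_LATENCY_MS + network_ms + app_ms


if __name__ == "__main__":
    print(pick_model("tts", live=True))
    # Placeholder 60 ms network and 40 ms app overhead, not measured values.
    print(flash_time_to_audio_ms(60, 40))
```

The latency sum shows why the 75 ms headline figure is a floor, not an end-to-end number.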

Speech quality is still the biggest reason to use ElevenLabs.

The platform’s speech quality is usually strongest when you want natural pacing, believable cadence, and a voice that does not immediately sound synthetic. ElevenLabs’ docs position the TTS stack around nuanced intonation, pacing, and emotional awareness, and that still matches the platform’s core reputation.

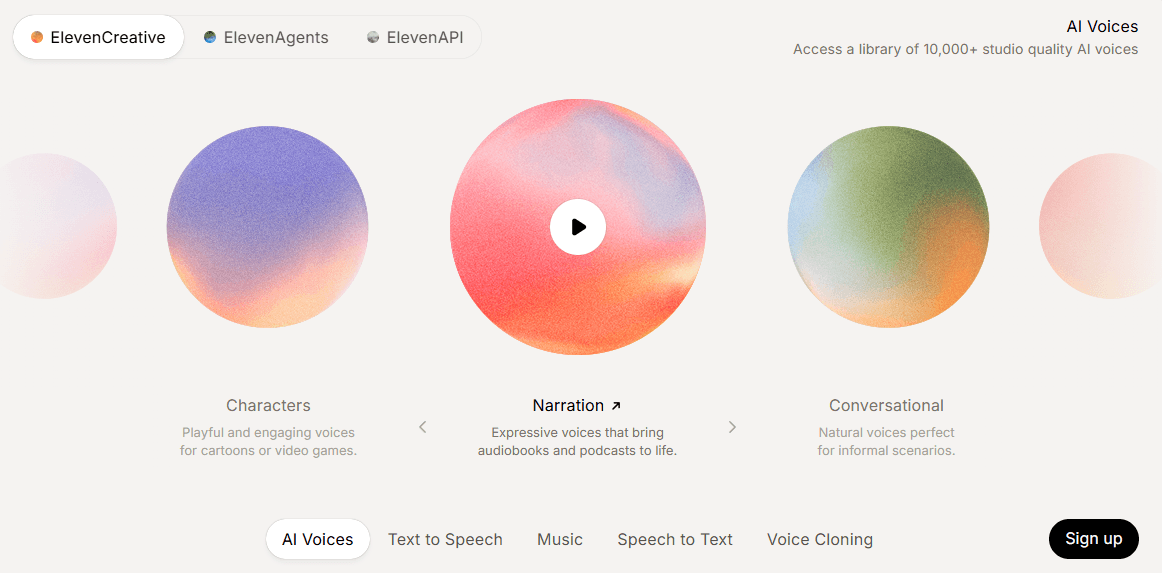

Where it gets more interesting is control. You are not limited to one stock voice list. ElevenLabs offers default voices, a large voice library, voice design for synthetic voices, and two levels of cloning. The Voice Library is now a marketplace for professional voice clones, and the broader creative docs describe access to 10,000+ pre-made voices or the option to clone your own.

The big practical distinction is between Instant Voice Cloning and Professional Voice Cloning. Instant is faster and more accessible. Professional is the higher-fidelity route if voice identity really matters. ElevenLabs’ own troubleshooting guidance recommends 1–2 minutes of consistent audio for instant cloning, versus at least 30 minutes and ideally 2+ hours for professional cloning.
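The cloning guidance can be encoded as a pre-flight check on how much clean source audio you have. Below is a minimal Python sketch. `cloning_recommendation` is a hypothetical helper. The cut-offs come from the guidance figures quoted above and are not hard API limits:

- Instant cloning: 1 minute minimum.
- Professional cloning: 30 minutes minimum, 2 hours ideal.

```python
def cloning_recommendation(clean_minutes: float) -> str:
    """Suggest a cloning tier from minutes of clean, consistent audio.

    Hypothetical helper; thresholds mirror ElevenLabs' published guidance
    as summarised in this article, not enforced limits.
    """
    if clean_minutes >= 120:
        return "professional (ideal amount of audio)"
    if clean_minutes >= 30:
        return "professional (minimum met)"
    if clean_minutes >= 1:
        # Guidance for Instant Voice Cloning is roughly 1-2 minutes.
        return "instant"
    return "record more audio first"


if __name__ == "__main__":
    for minutes in (0.5, 2, 45, 180):
        print(minutes, "->", cloning_recommendation(minutes))
```

Anything between 2 and 30 minutes still maps to instant here, because it falls below the professional minimum.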

Dubbing is one of the most commercially useful parts of the platform.

ElevenLabs dubbing supports three delivery modes: Dubbing Studio in the UI, API-based workflows, and human-verified dubs through Productions. The UI supports files up to 500 MB and 45 minutes, while the API supports up to 1 GB and 2.5 hours. It also supports speaker separation, editable transcripts, preserved original voice characteristics, and background-audio preservation.

That is a real advantage over simpler “translate my video” tools. The key value is not just translation. It is the ability to correct transcripts, tune delivery, regenerate segments, and treat localization like an editable workflow rather than a one-shot gamble.
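The size and duration caps decide which route a file can take. Below is a minimal Python sketch that checks a file against the UI and API limits quoted above. `dubbing_routes` is a hypothetical helper. Treating MB and GB as binary (1024-based) units is an assumption, because the exact unit convention is not stated here. The human-verified Productions route is not modelled.

```python
MB = 1024 ** 2  # assumption: binary megabytes
GB = 1024 ** 3  # assumption: binary gigabytes

# (max bytes, max seconds) for each self-serve route, per the limits above.
LIMITS = {
    "Dubbing Studio (UI)": (500 * MB, 45 * 60),
    "API": (1 * GB, int(2.5 * 60 * 60)),
}


def dubbing_routes(size_bytes: int, duration_s: float) -> list[str]:
    """Return every route whose size and duration caps the file fits under."""
    return [
        route
        for route, (max_bytes, max_seconds) in LIMITS.items()
        if size_bytes <= max_bytes and duration_s <= max_seconds
    ]


if __name__ == "__main__":
    # A 60-minute, 800 MB episode exceeds the UI caps but fits the API.
    print(dubbing_routes(800 * MB, 60 * 60))
```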

- Audiobook narration, YouTube voiceovers, and podcasts: strong fit when spoken delivery quality is part of the product, not an afterthought.

- Training content, product explainers, and multilingual ad production: especially useful when the workflow depends on clear delivery plus scalable voice generation.

- Localization teams: Dubbing Studio plus editable transcript and translation control make it much more practical than a basic TTS tool for repurposing video into more markets.

- Developers building agents and live voice workflows: the platform is increasingly compelling for products that need speech, transcription, and action-taking inside the same voice stack.

- Transcription-heavy products: Scribe v2 and Scribe v2 Realtime give ElevenLabs a more complete speech stack than competitors focused only on generation or only on recognition.

- Use Eleven v3 when delivery quality is the goal, and treat Flash v2.5 as the speed-first option.

- Use Instant Voice Cloning for rough internal work or early tests, but move to Professional Voice Cloning if the cloned identity will be client-facing or central to the project.

- For dubbing, plan on editing transcripts and translations instead of assuming the first automated pass is final. The product is clearly designed for that correction loop.

- Use Voice Isolator before cloning or publishing if your source material is noisy. Clean inputs help almost every downstream voice workflow.
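The dubbing correction loop recommended above can be pictured as a bounded review pass over segments. This is a hypothetical Python sketch, not the ElevenLabs API. `Segment`, `review_loop`, and the `regenerate`/`review` callbacks are illustrative stand-ins for whatever you do in Dubbing Studio or your own tooling. Segments still needing changes after `max_takes` generations are returned for a human to handle.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Segment:
    source_text: str
    translation: str
    takes: int = 0  # generations produced so far for this segment


def review_loop(
    segments: List[Segment],
    regenerate: Callable[[Segment], None],
    review: Callable[[Segment], Optional[str]],
    max_takes: int = 3,
) -> List[Segment]:
    """Regenerate each segment until review approves it or takes run out.

    `review` returns None to approve, or a corrected translation to retry with.
    Returns the segments that were never approved.
    """
    needs_human = []
    for seg in segments:
        approved = False
        while seg.takes < max_takes:
            regenerate(seg)
            seg.takes += 1
            correction = review(seg)
            if correction is None:
                approved = True
                break
            seg.translation = correction  # edit, then regenerate on next pass
        if not approved:
            needs_human.append(seg)
    return needs_human


if __name__ == "__main__":
    segs = [Segment("Hello", "Hola"), Segment("Goodbye", "Adios")]
    # Toy reviewer: request one fix, then approve the second take.
    flagged = review_loop(
        segs,
        regenerate=lambda s: None,
        review=lambda s: None if s.takes >= 2 else s.translation + "!",
    )
    print(len(flagged), [s.takes for s in segs])
```

Capping the takes per segment keeps the loop from running forever on a line the automation cannot get right.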

The biggest trade-off is that ElevenLabs is no longer one simple product. It is a platform. That is good for serious users, but it also means some people who just want “type text, get mp3” may find the broader product a bit sprawling.

The second limitation is that the best features are not equally accessible on every plan. Professional Voice Cloning, higher-quality API output, more collaboration features, and larger-scale usage are reserved for higher pricing tiers.

The third limitation is that realistic voice AI still depends heavily on input quality and workflow discipline. ElevenLabs’ own guidance shows that cloning quality rises significantly with cleaner, longer source audio.

And finally, the platform’s expansion into many categories means some users will only need a fraction of what they are paying for. If you do not need cloning, dubbing, transcription, or APIs, a narrower tool may be simpler.

ElevenLabs is one of the strongest AI audio platforms available right now because it combines high-quality speech generation with practical production tools: cloning, dubbing, transcription, cleanup, and developer infrastructure.

It is best for creators, publishers, localization teams, and product teams that need more than a basic narrator. The main caveat is that it has outgrown the “simple voice generator” category, so the platform is most worth it when you will actually use that deeper stack.
