Description:

- Introduction

- What 11AI Actually Is

- What 11AI Does Best

- Strong Features and Capabilities

- The Main Idea: Voice Assistants That Actually Do Things

- MCP Integration Is the Core Technical Advantage

- Workflow and Ease of Use

- Voice Quality and Personalization

- How 11AI Fits Into ElevenLabs’ Broader Platform

- Best Use Cases

- Limitations and Trade-Offs

- Final Takeaway

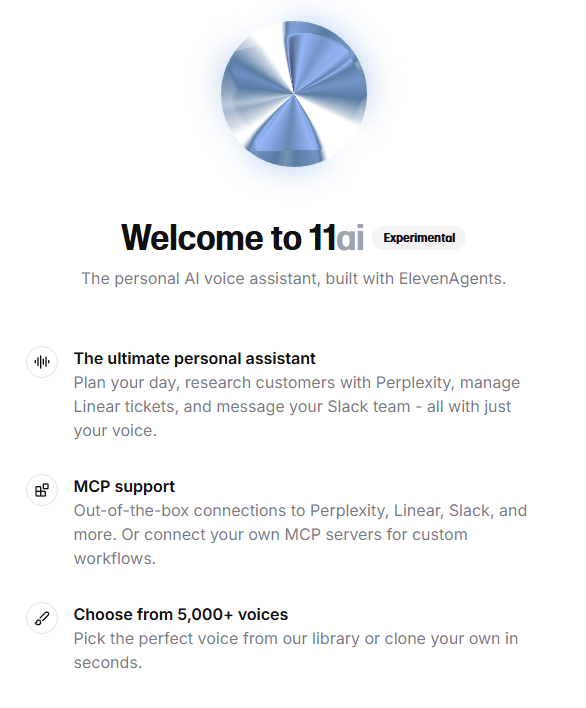

11ai is ElevenLabs’ experimental voice-first AI assistant for productivity, tool use, and action-taking workflows. It is not just a chatbot with a microphone button. The product is designed to let users speak naturally, connect everyday tools through MCP, choose or clone a voice, and ask the assistant to complete work across apps like Slack, Linear, Notion, Perplexity, Google Calendar, and custom MCP servers.

11ai is a personal AI voice assistant from ElevenLabs. It launched in alpha as a proof of concept for what can be built with ElevenLabs Conversational AI, especially when low-latency voice interaction is combined with external tool access through the Model Context Protocol.

The simplest way to understand it is this:

| Layer | What it does | Why it matters |
|---|---|---|
| Voice assistant layer | Lets you talk to an AI assistant naturally | Makes the experience feel closer to delegation than typing prompts |
| MCP integration layer | Connects 11ai to external tools and APIs | Allows the assistant to do useful work, not just answer questions |
| Personalization layer | Lets you choose from many ElevenLabs voices or create a custom voice clone | Makes the assistant feel less generic and more personal |
| Conversational AI layer | Uses ElevenLabs’ low-latency voice agent technology | Keeps spoken interaction responsive enough for real conversations |

That combination is the product’s real point. 11ai is not trying to replace every chatbot or every automation platform. It is trying to show what happens when an AI assistant can speak naturally, understand context, and take sequential actions across connected tools.

11ai is strongest when you want to move work through voice instead of typing commands into separate apps.

The launch examples are practical: planning your day and adding priority tasks to Linear, using Perplexity to research a prospect meeting, searching Linear issues and creating a follow-up ticket, or catching up on Slack messages from an engineering channel.

Those examples reveal the real use case. 11ai is not just for asking “what is the weather?” or “summarize this topic.” It is more interesting when the assistant has connected tools, enough context to understand the task, and permission to take action.

That makes it useful for:

- Founders who want to manage tasks while moving quickly

- Product teams that live in Linear, Slack, and Notion

- Sales teams that need quick prospect research before calls

- Operators who want voice-driven follow-up across systems

- Developers and teams experimenting with MCP-based assistant workflows

The important word is “workflow.” 11ai becomes more useful when it is connected to the tools where work already happens.

11ai is designed around spoken conversations rather than text-first prompting, making it better suited for planning, updates, and hands-free task delegation.

It uses the Model Context Protocol to connect with external tools through a standardized integration layer.

ElevenLabs lists integrations such as Perplexity, Linear, Slack, and Notion, with more integrations planned over time.

Teams can connect internal tools or specialized software through their own MCP servers.

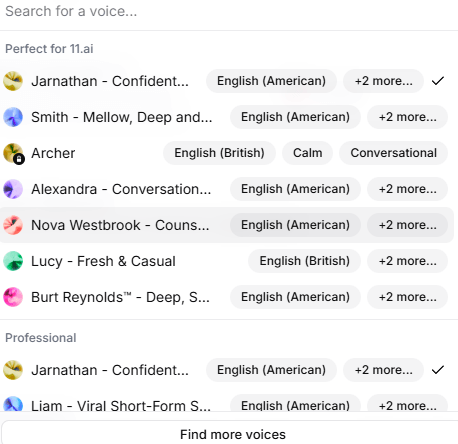

Users can select from over 5,000 ElevenLabs voices or create a custom voice clone for the assistant.

The platform uses ElevenLabs’ voice agent technology, which ElevenLabs positions as offering low-latency interaction, multimodal support, retrieval-augmented generation (RAG), language detection, and enterprise-level security.

The most important thing about 11ai is the shift from answering to acting.

Traditional voice assistants have usually been limited to simple tasks. They can answer questions, set timers, play music, or perform basic device actions, but they often fail when asked to complete meaningful work across modern apps. ElevenLabs frames 11ai as a response to that limitation: a voice assistant that can connect to everyday tools through MCP and take sequential actions.

That is the right direction. The problem with many AI assistants is not intelligence alone. It is disconnectedness. A smart assistant that cannot access your project tracker, messages, calendar, CRM, notes, or internal tools quickly becomes another tab to manage.

11ai’s bet is that voice becomes more powerful when the assistant can reach into those systems. For example, “catch me up on Slack” is not just a summarization task. It requires access, channel awareness, permission control, context selection, and a useful response. “Create a follow-up ticket in Linear” requires understanding the issue, selecting the right project or team, and writing a useful task.

That is where 11ai feels more like a productivity assistant than a voice chatbot.
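The difference between answering and acting can be sketched as a short chain of tool calls in which each step consumes the output of the previous one. The sketch below is purely illustrative: `search_issues`, `create_ticket`, and the in-memory data are hypothetical stand-ins, not Linear's or 11ai's actual API, and the "pick the first match" step is a deliberately naive placeholder for the assistant's judgment.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Issue:
    id: str
    title: str
    team: str


# Hypothetical in-memory stand-in for a project tracker.
ISSUES = [
    Issue("ENG-101", "Login page times out on slow networks", "Engineering"),
    Issue("ENG-102", "Update onboarding copy", "Design"),
]
CREATED: List[dict] = []


def search_issues(query: str) -> List[Issue]:
    """Step 1: find issues whose title mentions the query (case-insensitive)."""
    return [i for i in ISSUES if query.lower() in i.title.lower()]


def create_ticket(title: str, team: str, parent_id: str) -> dict:
    """Step 3: record a new ticket linked to the issue it follows up on."""
    ticket = {"title": title, "team": team, "parent": parent_id}
    CREATED.append(ticket)
    return ticket


def follow_up(query: str) -> Optional[dict]:
    """Chain the steps: search, pick a target, then create the follow-up."""
    matches = search_issues(query)
    if not matches:
        return None  # nothing to act on; a real assistant would ask the user
    issue = matches[0]  # Step 2: choose the issue (naively, the first match)
    return create_ticket(f"Follow up: {issue.title}", issue.team, issue.id)


result = follow_up("login")
print(result)
```

Even in this toy form, the second step is where most of the difficulty sits: choosing the right issue, team, and wording is what separates a useful follow-up from noise.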

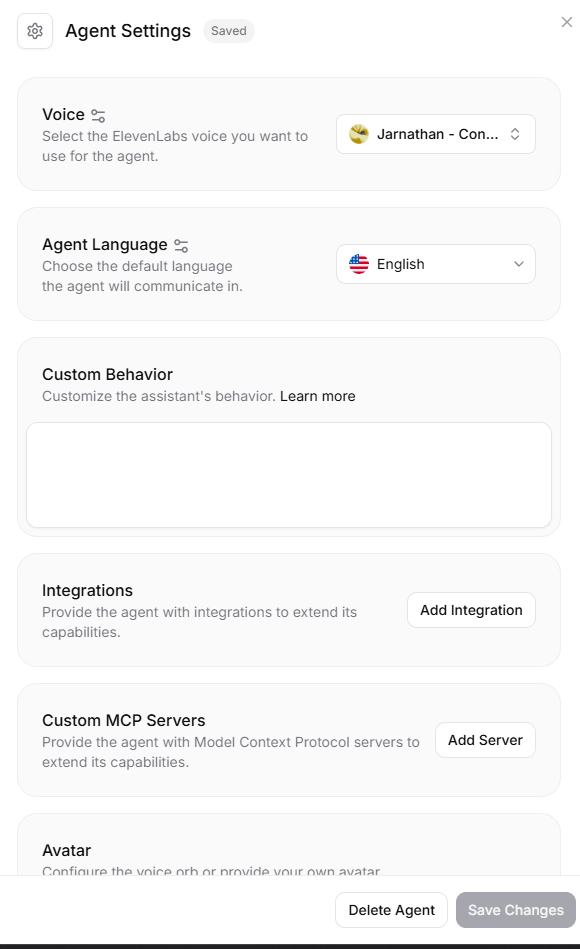

MCP is central to 11ai’s value. ElevenLabs describes MCP as a standardized way for AI assistants to integrate with external APIs through a uniform protocol. The 11ai launch post says ElevenLabs Conversational AI has built-in support for MCP, allowing agents to connect to services such as Salesforce, HubSpot, Gmail, Zapier, and more.

This matters because integrations are often the hardest part of building useful assistants. Without integrations, an assistant can only talk. With integrations, it can read, update, create, search, route, and trigger actions.

11ai currently highlights ready-made integrations for Perplexity, Linear, Slack, and Notion. Those are good early choices because they cover research, project management, team communication, and knowledge/task management.

The custom MCP server support is just as important. Many serious teams do not run only on popular SaaS tools. They have internal dashboards, custom admin panels, private databases, support systems, and proprietary workflows. The ability to connect custom MCP servers gives 11ai a path beyond generic personal assistant use.
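To make the custom-server idea more concrete: MCP messages are JSON-RPC 2.0, and a server exposes its tools through methods such as `tools/list` and `tools/call`. The sketch below is a toy, in-process handler written with only the Python standard library. It is not the official MCP SDK, it omits the initialization handshake and transport, and the `lookup_order` tool and its data are invented for illustration.

```python
import json

# Hypothetical internal tool; the name, schema, and data are invented.
TOOLS = {
    "lookup_order": {
        "description": "Look up an order's status by ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }
}
ORDERS = {"A-17": "shipped"}


def _error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request with an MCP-style result."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    params = req.get("params") or {}
    if method == "tools/list":
        result = {"tools": [{"name": n, **spec} for n, spec in TOOLS.items()]}
    elif method == "tools/call":
        if params.get("name") not in TOOLS:
            return _error(req_id, -32602, "Unknown tool")  # invalid params
        order_id = params.get("arguments", {}).get("order_id")
        status = ORDERS.get(order_id)
        result = {
            "content": [{"type": "text", "text": status or "order not found"}],
            "isError": status is None,
        }
    else:
        return _error(req_id, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
        "params": {"name": "lookup_order", "arguments": {"order_id": "A-17"}}}
response = json.loads(handle(json.dumps(call)))
print(response["result"]["content"][0]["text"])
```

The point of the uniform shape is that an assistant can discover and call an internal tool the same way it calls a ready-made integration, without a bespoke connector for each system.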

The 11ai setup flow is conceptually simple: sign up, choose a voice, connect tools, and start a conversation. ElevenLabs’ launch article lists those steps directly, including connecting Google Calendar, Linear, Slack, Perplexity, and custom MCP servers.

That simplicity is important because voice assistants need to feel immediate. If setup is too complex, people will not use voice as a daily habit.

In real use, the learning curve will likely come from permissions and workflow design. Users need to decide which tools the assistant can access, what actions it is allowed to take, and how much autonomy feels safe. ElevenLabs says MCP connections can be configured with appropriate permissions, giving users control over what the assistant can and cannot do.

That permission layer is essential. A voice assistant that can update a CRM, send a message, create a task, or search private tools needs clear boundaries. The best 11ai setup will not be “connect everything and hope.” It will be careful, narrow, and workflow-specific.
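One way to picture a careful, narrow setup is a deny-by-default allowlist in which write actions require explicit confirmation. The sketch below is a thought experiment, not a description of how 11ai or ElevenLabs implements permissions: the `ToolPolicy` class, the action names, and the tool keys are all hypothetical.

```python
from dataclasses import dataclass, field

READ, WRITE = "read", "write"


@dataclass
class ToolPolicy:
    """Per-tool allowlist; anything not listed is denied by default."""
    allowed: dict = field(default_factory=dict)  # tool -> set of permitted actions
    confirm: set = field(default_factory=set)    # (tool, action) pairs needing a spoken yes

    def check(self, tool: str, action: str) -> str:
        """Return 'deny', 'confirm', or 'allow' for a requested action."""
        if action not in self.allowed.get(tool, set()):
            return "deny"
        if (tool, action) in self.confirm:
            return "confirm"
        return "allow"


# Slack is read-only; Linear can be written to, but only after confirmation.
policy = ToolPolicy(
    allowed={"slack": {READ}, "linear": {READ, WRITE}},
    confirm={("linear", WRITE)},
)

print(policy.check("slack", READ))    # allow
print(policy.check("slack", WRITE))   # deny
print(policy.check("linear", WRITE))  # confirm
print(policy.check("crm", READ))      # deny (tool was never connected)
```

Starting from "deny" and widening access one workflow at a time keeps the blast radius small if the assistant misunderstands a spoken request.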

Because 11ai comes from ElevenLabs, voice quality is one of its natural strengths.

ElevenLabs’ broader platform is built around ultra-realistic speech, voice cloning, voice design, text-to-speech, agents, speech-to-text, and a large voice library. The company’s main site describes more than 5,000 voices, support for 70+ languages across its broader platform, and agent workflows that can talk, type, and take action.

For 11ai specifically, users can choose from over 5,000 voices or create a custom clone that sounds like them.

That personalization is not just cosmetic. A personal assistant is used repeatedly. The voice affects whether the assistant feels helpful, distracting, pleasant, or fatiguing. A generic robotic voice can make even a smart assistant feel cold. A natural voice makes spoken interaction easier to tolerate over longer sessions.

The custom voice option also changes the emotional feel of the product. Some users may want an assistant that sounds like a neutral professional. Others may want something more personal, branded, or familiar. Teams building internal demos may also use custom voices to prototype different assistant personas.

11ai sits at the intersection of ElevenLabs’ creative voice technology and its agent infrastructure.

ElevenLabs’ current platform is organized around ElevenCreative, ElevenAgents, and ElevenAPI. ElevenAgents is positioned around agents that can talk, type, and take action across phone, chat, email, and WhatsApp, with analytics, testing, guardrails, workflows, and business logic.

11ai feels like the personal productivity version of that same direction. Instead of building a customer support agent for a business, you are experimenting with a personal assistant that can connect to your tools and help you act across your workday.

This is useful because it means 11ai is not an isolated novelty. It is a demonstration of the same underlying voice-agent stack ElevenLabs is building for developers and enterprises. The launch post says exactly that: 11ai is a proof of concept showcasing what developers can build with ElevenLabs Conversational AI.

- Daily planning: Ask 11ai to review your day, summarize priorities, and create or update tasks in connected tools.

- Project management: Use it to search project issues, create follow-up tasks, and pull context from tools like Linear and Notion.

- Team catch-up: Ask for summaries of Slack channels, project updates, or missed conversations.

- Sales and customer research: Use Perplexity and connected systems to prepare for prospect meetings or customer calls.

- Personal operations: Turn spoken instructions into follow-up actions across tools, notes, calendars, and task systems.

- Internal workflow experiments: Test what MCP-based voice agents could do with custom servers and company-specific systems.

- The first limitation is maturity. 11ai launched as an alpha and proof of concept, so it should be judged as an experimental assistant rather than a fully settled productivity platform.

- The second limitation is integration dependence. 11ai becomes much more useful when connected to tools, but that also means setup, permissions, and workflow boundaries matter more than they do in a simple chatbot.

- The third limitation is trust. A voice assistant that can create tasks, search messages, update records, or interact with internal systems needs careful permissioning and review, especially in team environments.

- The fourth limitation is that voice is not always the best interface. Voice is excellent for planning, catch-up, quick delegation, and hands-free workflows, but text may still be better for dense editing, precise prompts, complex review, and sensitive actions.

11ai is best understood as ElevenLabs’ voice-first assistant experiment for tool-connected productivity. Its strongest idea is not just natural speech. It is natural speech plus connected workflows through MCP.

It is most useful for people and teams that want to speak tasks into motion across tools like Slack, Linear, Notion, Perplexity, Google Calendar, and custom systems. The main caveat is that 11ai is still an alpha-stage proof of concept, so its value depends heavily on the connected tools, permissions, and workflows you build around it.

The product is interesting because it points toward where voice assistants are going: not just answering questions, but taking action inside the systems where work already happens.

TAGS: Text to Speech
