Description:

Manus is closer to “assign work to an agent” than “chat with a bot.” The product is built around execution: researching, building, editing, browsing, and producing finished outputs like websites, reports, slides, and automated workflows. That makes it more interesting for task-heavy users than for people who only want quick conversational answers.

The key idea is simple: Manus is strongest when the goal is not just to get an answer, but to get actual work done. That shift changes how the tool should be tested. The best prompts are usually not simple questions. They are assignments with a deliverable at the end.

Manus’s own documentation describes it as an autonomous general AI agent that can plan, execute, and deliver complete work products from start to finish. That framing is central to the product and determines which kinds of tasks it suits best.

Manus promotes itself as an AI website builder that can create full-stack websites and web apps from plain-English requests. The docs also describe workflows that include backend, database, authentication, text generation, and image generation.

Manus has a dedicated Wide Research workflow and showcases official research use cases, including product analysis, market mapping, and structured data collection. That makes research one of its most credible practical strengths.

Agent Skills are one of the platform’s more interesting features. Manus describes them as modular resources that package capability, workflow instructions, and context so the agent can perform tasks more consistently. It also emphasizes one-click import/export and scaling Skills across a team.

Manus is not limited to working in text. Its product lineup includes a browser operator, and its newer workspace updates highlight precise Google Docs editing and other hands-on document actions.

The My Computer feature works via command-line execution, allowing Manus to read, analyze, and edit local files and launch local apps. That makes it notably more operational than assistants that stay inside chat.

A Manus engineering post says a typical Manus task requires around 50 tool calls, which helps explain why the product is aimed at substantial agent workflows rather than lightweight chat.

Manus makes more sense when you choose the workflow based on the job rather than treating every task like a chat prompt.

- Wide Research: best when you want competitor mapping, market scans, structured research, or report-ready findings.

- Website / app building: best when the goal is a real build output, not just wireframe ideas or code snippets.

- Browser operator: best when the task depends on visiting websites, inspecting live pages, or collecting structured information from the web.

- Skills: best when you expect to repeat the same workflow and want more consistency across runs or across a team.

- My Computer: best when the task depends on local files, command-line work, or editing material outside a normal chat flow.

- Workspace / document editing: best when the goal is to revise, clean up, or update an existing document with more control than simple rewrite prompts.

This is one of the biggest differences between Manus and a standard assistant. The tool becomes more useful as the task becomes more operational, multi-step, and output-driven.

Best workflow: Wide Research

Prompt: Research the top AI website builders for small businesses. Compare pricing, strengths, weaknesses, target users, and standout features. Then turn the findings into a clean report with a summary, comparison table, and final recommendation.

Why this is a good first test: Manus leans heavily into “do the work and deliver the output,” and research plus structured reporting is one of its clearest fits. Manus also promotes Wide Research and report-style workflows directly.

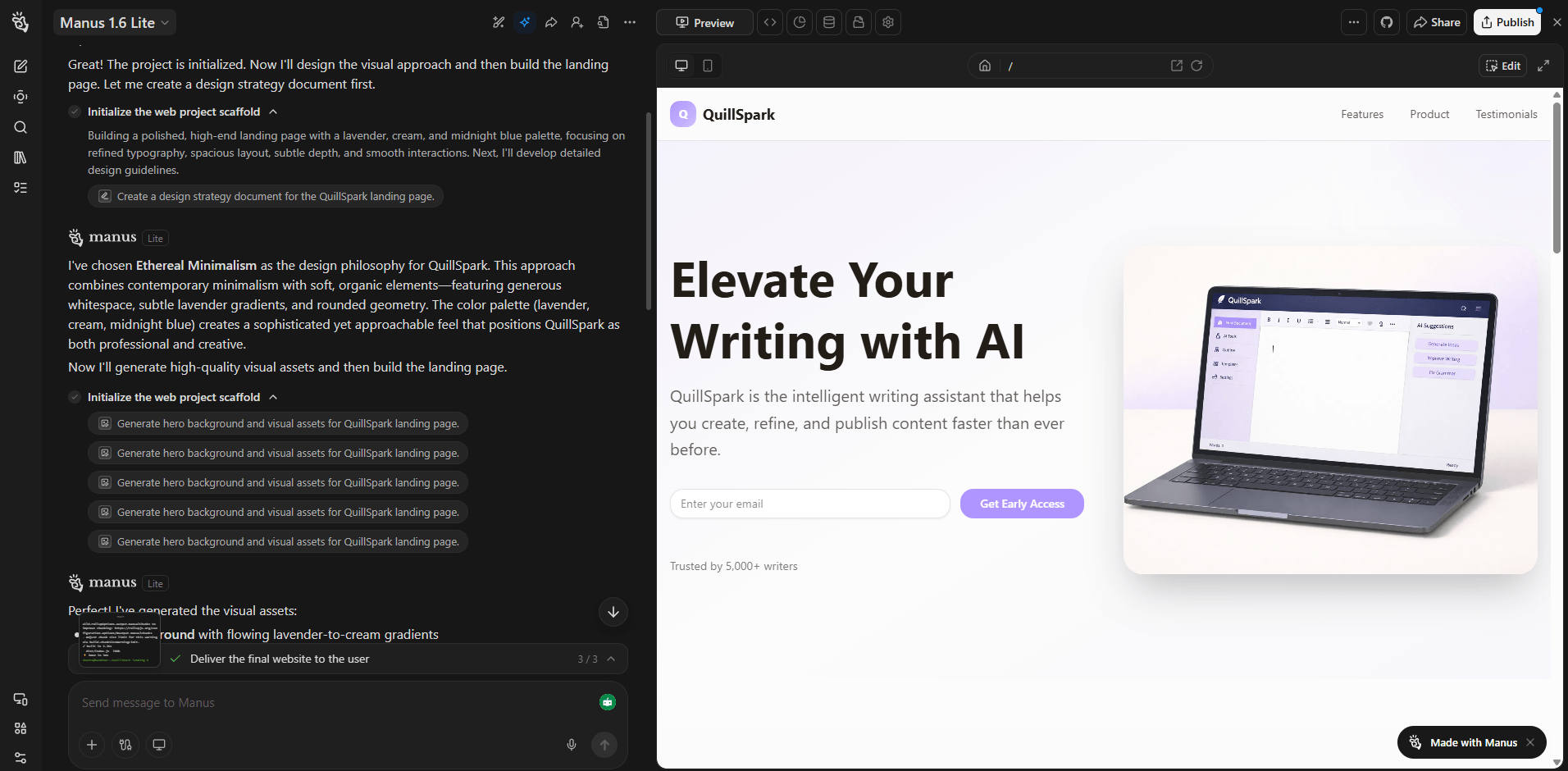

Best workflow: Website / app builder

Prompt: Create a landing page for a modern productivity app. Include a hero section, features, pricing, testimonials, FAQ, and call-to-action. Make the design clean and modern, and generate the full site structure so it is ready to preview.

Why it matters: Manus explicitly positions itself as able to turn plain-language instructions into full-stack websites and web apps, not just mockups.

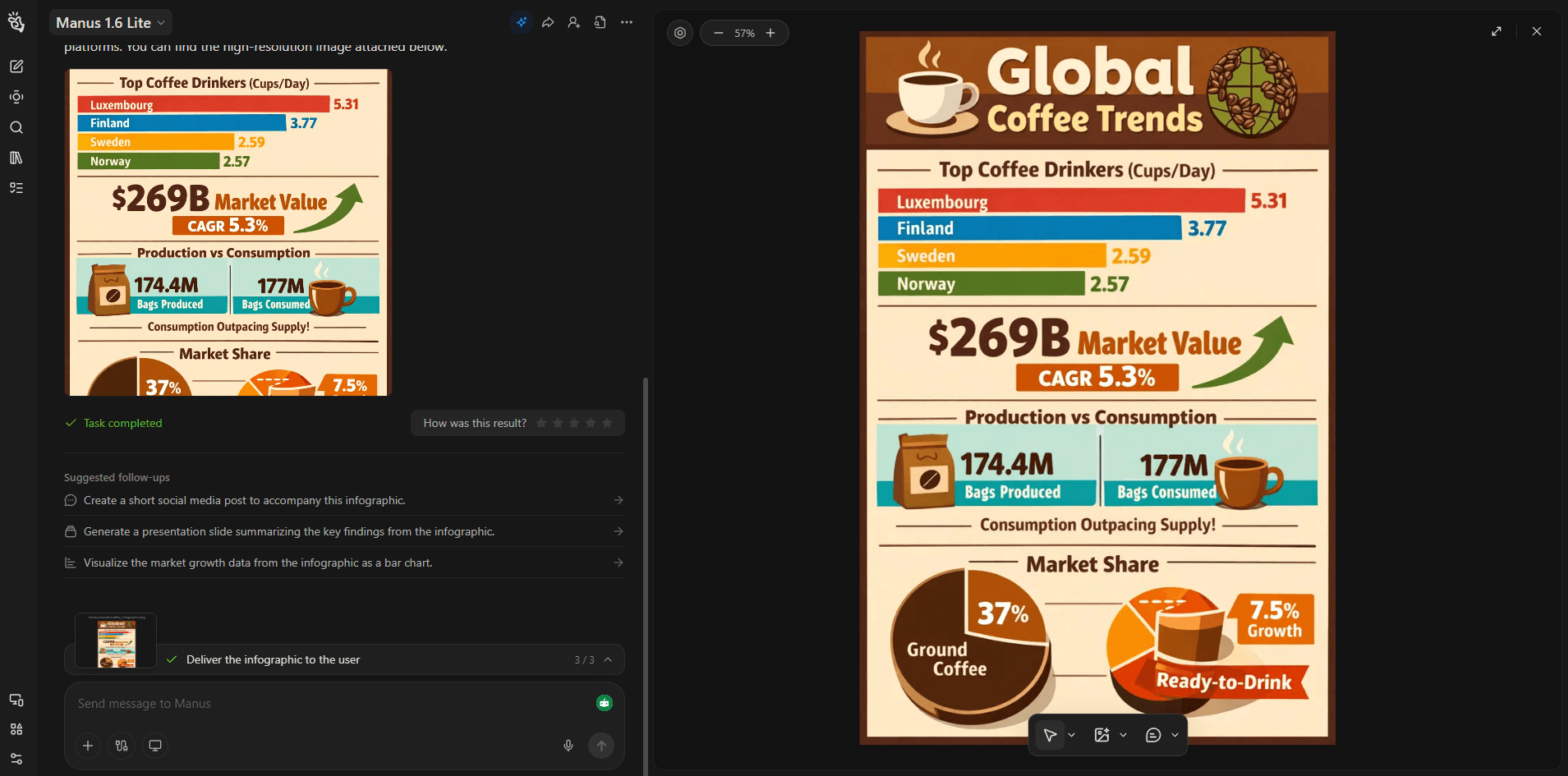

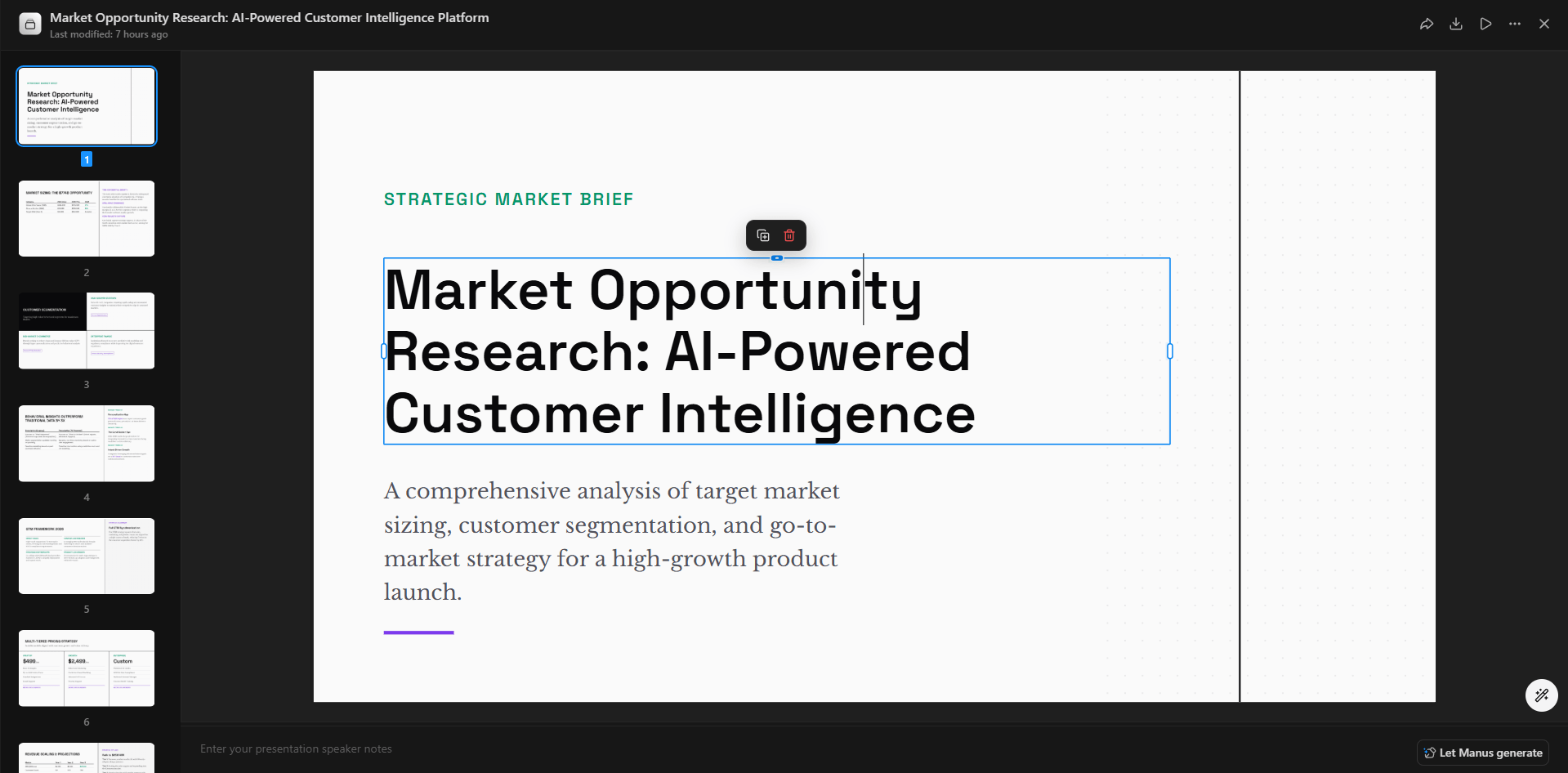

Best workflow: Research + slide creation

Prompt: Research the current state of AI video tools for marketers, then create a 10-slide presentation with concise slide titles, key points, and a logical flow for a business audience.

Why this belongs early: Manus promotes AI slide creation as a direct product workflow, so this is a more natural test than a generic writing prompt.

Best workflow: Browser operator

Prompt: Visit the websites of three competitors in the online course space. Capture their positioning, pricing, page structure, and lead-generation tactics, then summarize the patterns in one document.

Why this is useful: Manus includes a browser operator workflow, which makes web-based research and observation one of its more distinctive practical uses.

Best workflow: Skills

Prompt: Create an Agent Skill for writing product comparison reports. It should define the research steps, output format, tone, and required sections so my team can reuse it for future reports.

Why this is a strong test: Skills are one of Manus’s clearest differentiators. The platform emphasizes reusable workflows that scale team knowledge beyond one-off prompting.

Best workflow: My Computer

Before using this prompt: Make sure the local files you want Manus to inspect are available in the environment it can access.

Prompt: Analyze these local files, identify the most important information, reorganize the messy parts, and prepare a cleaner working version I can continue from.

Why it matters: Manus’s My Computer capability is built around terminal-based interaction with local files and applications, which makes it more action-oriented than a standard chat assistant.

Best workflow: Multi-step planning + research

Prompt: I want to launch a niche content website. Research the market, suggest the positioning, outline the site structure, draft the first content ideas, and give me a practical launch plan from setup to first promotion steps.

Why this is a good fit: This prompt reflects Manus’s multi-stage strength. It is better suited to jobs that combine research, planning, and output creation than to one-step questions.

Best workflow: Workspace / document editing

Before using this prompt: Open the Google Doc or workspace document you want revised first.

Prompt: Take this Google Doc and update the introduction, rewrite the weak sections, improve the headings, and clean up repeated wording without changing the overall purpose of the document.

Why this is a smart test: Manus recently added more granular document editing inside Google Docs and workspace workflows, which makes it more useful for real revision work than tools that only append text.

Best use cases:

- Market and competitor research that needs a finished report: one of Manus’s clearest strengths because it is designed to return deliverables, not just findings.

- Website or web app generation from natural-language instructions: stronger fit when the goal is an actual build output rather than only a concept or draft.

- Turning research into slides or structured deliverables: especially useful for business-facing outputs that need a cleaner end product.

- Browser-based business research and workflow automation: more practical here than with assistants that cannot act on the web directly.

- Reusable internal workflows through Skills: valuable when teams want more consistent repeated execution.

- File-heavy and document-heavy work that needs actual edits: more compelling when the task involves revising, restructuring, or cleaning up real material.

- Longer, multi-step assignments where execution matters more than fast conversation: this is the product category where Manus makes the most sense.

Tips for better results:

- Give Manus an actual assignment, not just a question. It is better suited to “research this and deliver a report” than “tell me about this topic.” This is an inference based on Manus’s agent-first design and product positioning.

- Ask for a deliverable format up front, such as report, slide deck, website, table, or action plan. Manus’s product surface is built around outputs.

- Use Skills when you expect to repeat the workflow. That is one of the best ways to make Manus more consistent over time.

- Use it for work that benefits from tool use and execution, not just brainstorming. Manus becomes more interesting as the task gets more operational. This is an inference grounded in its official documentation.

Manus is more ambitious than a normal chat assistant, and that is also where the trade-off appears. Tasks that involve many steps, tools, and outputs can be more powerful, but they also take longer to run than a quick question. Manus’s own engineering notes about long tool-call loops reinforce that this is a system designed for substantial tasks, not instant chat replies.

It also appears to make the most sense for users who value workflow execution, research, deliverables, and automation. If someone mainly wants casual conversation or a general-purpose chatbot for light everyday prompts, Manus may feel like a different category of product entirely. That is an inference from the way Manus presents its tools and agent workflows.

Manus is most interesting when you stop thinking of it as a chatbot and start treating it like a work agent. Its strongest appeal is not personality or casual conversation, but the ability to research, build, edit, browse, and produce finished outputs across longer workflows. For users who want AI to take on more of the task itself, Manus stands out for exactly that reason.

TAGS: AI Automation, AI Chat/Assistant
